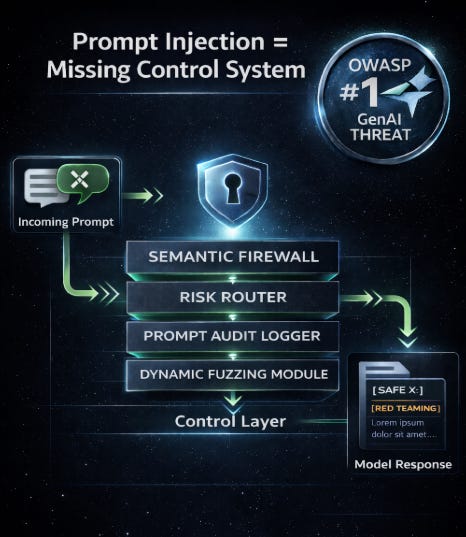

Prompt Injection Isn’t a Bug - It’s a Missing System Layer

You can’t patch your way out of prompt injection - you need a control system that understands language risk.

Introduction: The Mirage of Static Defenses

Most enterprises still treat prompt injection like a solvable input sanitization problem. But the reality is deeper: LLMs aren’t traditional software. They’re non-deterministic systems without execution boundaries - and prompt injection exploits that fundamental openness.

The rise of jailbreaks, agent hijacks and data leakage is not a surprise. It’s a signal: enterprises need security models purpose-built for language-based systems.

The Failure of “One-Shot Defenses”

Here’s what hasn’t worked:

Regex Sanitizers: Break easily, brittle and unaware of semantic attacks

Prompt Guards: Static wrappers without state or memory of past interactions

Blacklists: Can’t keep up with new injection patterns or indirect attacks

Enterprises that rely on these alone often experience:

Sudden failures in high-sensitivity workflows

Undetected data exfiltration through natural language queries

Reputational damage when models are tricked into unsafe responses

Prompt Injection as a Systemic Risk

Prompt injection is not just an application-layer concern. It exposes missing primitives across:

Session Memory Models - What context is retained or leaked

Execution Boundaries - What the LLM is allowed to do

Audit Trails - What the model was told and by whom

Model Routing - Whether low-risk requests can be safely sandboxed

It’s not a bug. It’s the absence of a control system.

What a Real Defense Looks Like

The emerging stack to mitigate injection includes:

Intent-aware Firewalls: Parse and score semantic intent before model execution

Quarantine Routes: Route high-risk prompts to hardened, scoped-down models

Token Attribution: Tag all outputs with source + propagation trace

Dynamic Prompt Fuzzing: Real-time adversarial testing per session

Replayable Prompt Audit Trails: Full reconstruction of prompt → model → response

Think of it less like input validation and more like an LLM-aware runtime.

OWASP and the New Security Normal

Prompt injection is now OWASP GenAI Threat #1. But enterprise responses remain lagging.

Why?

Security teams lack visibility into LLM usage

AppSec tools don’t map to GenAI risk surfaces

“Safe prompting” is too reliant on prompt engineers - not systems

It’s time for AI systems to inherit the maturity of API gateways, firewalls and zero-trust patterns.

Metrics to Track

Injection Escape Rate (IER): How often attackers successfully trick your LLM into doing something it shouldn’t.

Risk-Weighted Prompt Score (RPS): A single score that tells you how dangerous a prompt really is based on intent, context and past attack patterns.

Detection Latency (DL): Time taken to identify and flag a high-risk or escaping prompt from initial ingestion.

Audit Replay Coverage (%): Proportion of LLM interactions that can be fully reconstructed end-to-end for forensic analysis and compliance.

Without this telemetry, compliance is aspirational.

The Case for a GenAI Security Control Plane

What Kubernetes did for containers, GenAI security control planes must do for language systems:

Declare intent boundaries

Route per risk policy

Observe across sessions and agents

Enforce escape detection and response

You don’t “fix” prompt injection. You build a system that makes it survivable.

Conclusion: Secure-by-Default, Not Just Prompt-Hardening

Language is not static. Neither are its risks. Prompt injection is here to stay - but it doesn’t have to be fatal.

Enterprises can move from patching to prevention by treating security as a control layer, not a checklist.

We’re FortifyRoot - the LLM Cost, Safety & Audit Control Layer for Production GenAI.

If you’re facing unpredictable LLM spend, safety risks or need auditability across GenAI workloads - we’d be glad to help.