The AI Inference Arms Race - Why the Right Chip Stack Can Save or Sink Your Budget

Inference hardware isn’t just a speed factor - it’s your biggest silent cost lever in GenAI deployment.

Introduction: Beyond Just GPUs

As GenAI matures, inference costs - not training - dominate enterprise LLM spend. Choosing the wrong hardware stack leads to silent inefficiencies. A 5x latency gap or 70% cost delta per token is entirely possible based on chip selection alone. For many, the default (NVIDIA) is powerful but not always optimal.

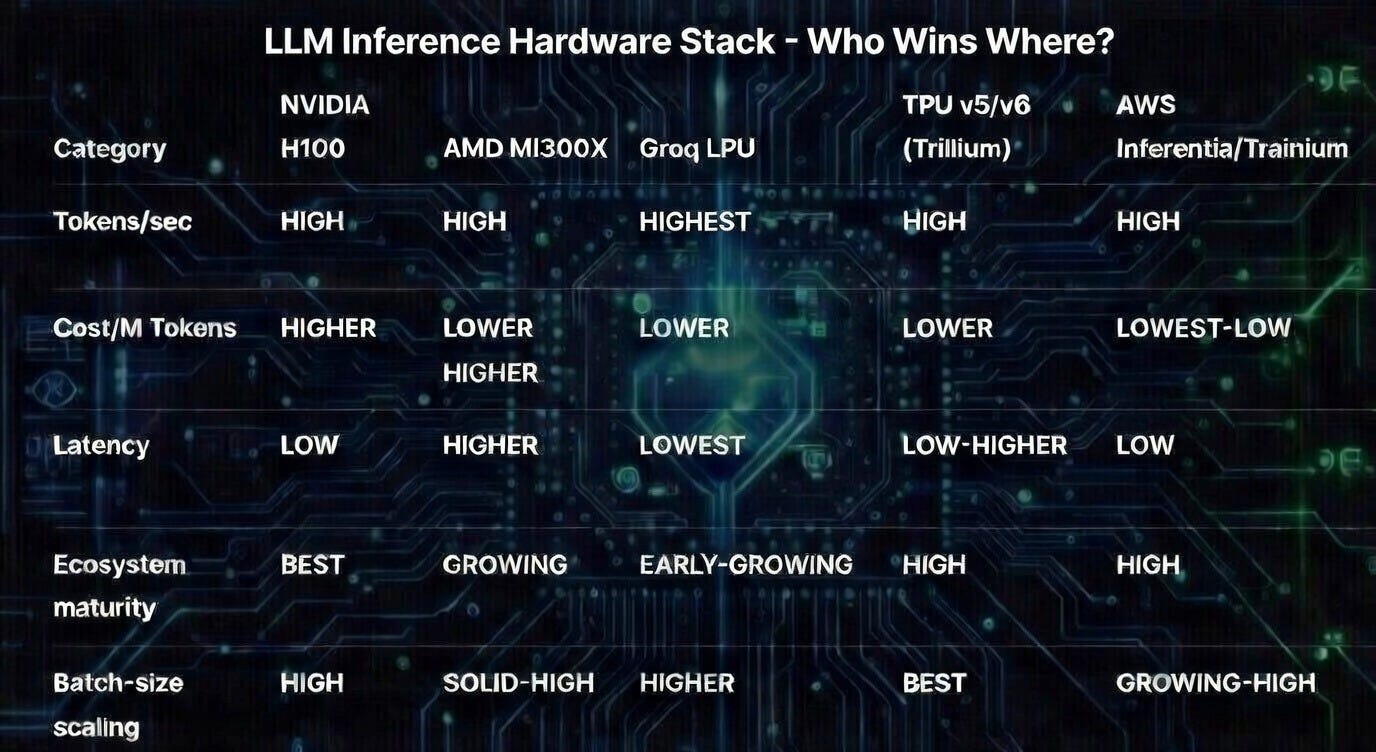

Core Players in Inference Compute

NVIDIA H100/H200: Ubiquitous, CUDA-optimized, supported across cloud platforms. Strong training and inference.

AMD MI300X: 192 GB HBM(High Bandwidth Memory), great memory bandwidth. Microsoft is betting on these for Azure OpenAI workloads.

Google TPUs (v4–v6e): Tight TensorFlow integration. Anthropic’s Claude stack is TPU-optimized. Now they support both PyTorch(via XLA) and JAX.

AWS Inferentia2: Cheaper per-token inference for high-volume tasks. Limited ecosystem.

Groq LPU: 800+ tokens/sec deterministic inference at <$1/M tokens. Extremely fast, but limited to inference only.

Cerebras WSE-3: One-chip large model execution. Ideal for research, extreme batch size.

How the Wrong Choice Hurts

Latency Spikes: High tail latencies mean slower responses to users and support costs go up.

Over-provisioned Memory: Paying for massive HBM you don’t need for small models.

Vendor Lock: Ecosystem rigidity can prevent switching or cost-based rebalancing.

Wasted Throughput: Training-optimized chips used inefficiently for real-time inference.

Metric-Based Hardware Selection

Instead of defaulting to the popular chip, measure:

Tokens/sec @ p95 latency

Effective cost per million tokens

Power usage per inference batch

Throughput at different batch sizes

Benchmark these metrics using your model (e.g., LLaMA 70B, Claude, GPT) against workload type (chat vs classification).

Hybrid Model = Hybrid Hardware

Run high-volume, low-sensitivity tasks on Groq or Inferentia

Use NVIDIA or AMD GPUs for general-purpose, high-customization workloads

Deploy large batch, research-grade LLMs on Cerebras or TPUv5e

Cloud Marketplace Matters

Hardware is only as useful as its availability:

CoreWeave, Lambda: Cheaper GPU rentals

GCP: TPUs for high-performance training/inference using TensorFlow or JAX

AWS: Deep ecosystem but costlier GPU hours

Dedicated colocation: for high-utilization teams

Enterprise Decision Framework

When choosing a stack:

What is your latency requirement?

How variable is your prompt size?

Are models fixed or swappable?

What is your vendor lock tolerance?

Can you split traffic by workload class?

Conclusion: Inference Strategy is a FinOps Lever

Enterprises often assume hardware is a sunk cost. It’s not. For GenAI, inference hardware is a strategic choice - one that materially affects latency, cost-per-output and resilience. Treat hardware choice like a service contract: benchmark, model and revisit regularly.

We’re FortifyRoot - the LLM Cost, Safety & Audit Control Layer for Production GenAI.

If you’re facing unpredictable LLM spend, safety risks or need auditability across GenAI workloads - we’d be glad to help.